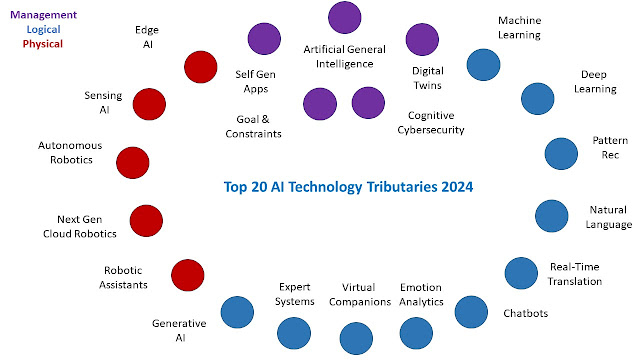

It is also essential to know all the technology tributaries. These tributaries offer strengths that can contribute to business outcomes, but they also have challenges in implementation and operation. I gathered the most common AI technologies and briefly described where to use them and where to avoid or bolster their use. Often, organizations combine several of these tributaries to accomplish their desired outcomes and keep them current in a more automatic way. Keep in mind that these tributaries are maturing quickly and independently today, so organizations will have to package a number of them to reach higher-order outcomes. I hope this enumeration will help you spend your 2024 AI budget.

Figure 1: AI Tributaries

Logical

Machine Learning

Definition: Machine learning is the kind of AI that teaches computers to learn from experience, represented by data and information, without relying on a predetermined equation or set of rules. Machine learning algorithms adaptively improve their performance as the number of data samples available for learning increases.

When to Use: Use machine learning when you can't code the rules, such as for human tasks involving recognition, where there are many variables that change frequently.

When Not to Use: When the data is problematic: too noisy, too dirty, or grossly incomplete.
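To make "learning from data rather than from coded rules" concrete, here is a minimal sketch using a nearest-centroid classifier in plain Python. The technique, the function names (`train_centroids`, `predict`), and the toy animal measurements are all assumptions chosen for illustration; they are not taken from any particular product or library.

```python
from statistics import mean


def train_centroids(samples):
    """Learn one average point (centroid) per label from (features, label) pairs."""
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical toy data: (height_cm, weight_kg) -> label.
training = [
    ((20.0, 4.0), "cat"),
    ((25.0, 5.0), "cat"),
    ((60.0, 30.0), "dog"),
    ((55.0, 25.0), "dog"),
]

model = train_centroids(training)
prediction = predict(model, (22.0, 4.5))
print(prediction)
```

No rule such as "under 30 cm means cat" is written anywhere; the boundary comes entirely from the samples, which is also why noisy or incomplete data degrades the result.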

Deep Learning

Definition: Deep learning is a specialized form of machine learning that attempts to learn the way humans do, identifying objects and linking them to each other using a neural network: layers of interconnected nodes, called neurons, that work together to process and learn from data. It's a form of patterned learning.

When to Use: Deep learning is used where a large amount of data is available and higher accuracy is required. Typically, deep learning learns from its mistakes and incorporates the lessons learned.

When Not to Use: Deep learning has a high computational cost that must be factored into solutions. It also depends heavily on data quality, and the scope of the data it is trained on may limit its ability to deal with unforeseen situations.

Pattern Recognition/Perception

Definition: Pattern recognition is the automated recognition of patterns and regularities in data of various sorts. These patterns can be classified and leveraged to make decisions or predictions. New and emergent patterns can be detected for further analysis.

When to Use: Pattern recognition is critical in improving comprehension of the intricacies of complex problems. It is beneficial for recognizing objects in images, scanning, and photo-related interpretations.

When Not to Use: Again, the state of the data is critical, and significant variations in the data may disqualify pattern recognition as a solution.

Natural Language Processing (NLP)

Definition: NLP is a form of AI that allows computers to understand human language in any form and leverage it for a more seamless human-computer experience.

When to Use: NLP is a significant bridging mechanism between humans, using their own language, and computers. It is often used so that computers can read text or hear speech in order to interpret it, measure sentiment, and identify important words and phrases.

When Not to Use: NLP is not as helpful when language is inconsistent or ambiguous, particularly where sarcasm and cultural context are involved.
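The sarcasm caveat can be shown with a deliberately naive sketch: a word-counting sentiment scorer in plain Python. The tiny word lists and the `sentiment_score` function are hypothetical and far simpler than production NLP; the point is only to show how a literal reading of words misjudges a sarcastic sentence.

```python
import re

# Hypothetical mini-lexicons; real sentiment systems use far richer models.
POSITIVE = {"great", "perfect", "love", "helpful"}
NEGATIVE = {"broken", "slow", "hate", "useless"}


def sentiment_score(text):
    """Count positive words minus negative words in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum((word in POSITIVE) - (word in NEGATIVE) for word in words)


literal = sentiment_score("I love this helpful app")
complaint = sentiment_score("The app is slow and broken")
sarcastic = sentiment_score("Great, another outage. Just perfect.")

print(literal, complaint, sarcastic)
```

The sarcastic complaint receives the same positive score as the sincere praise, because the scorer sees only the words "great" and "perfect" and not the intent behind them.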

Real-time Universal Translation

Definition: Real-time translation helps people translate one language to another instantly. People who speak different languages can hold a conversation or meeting with minimal delay or loss of accuracy.

When to Use: Universal translation (UT) is an essential tool for breaking down language barriers and facilitating cross-cultural communication.

When Not to Use: UT cannot reliably translate expressions, idioms, slang, abbreviations, or acronyms, and it cannot provide a translation that is both accurate and creative. Therefore, it should be used with caution.

Chatbots

Definition: A chatbot is a software application or web interface that aims to mimic human conversation through text or voice interactions. Chatbots that reflect real-world interactions and learn incrementally are the most effective.

When to Use: Chatbots provide timely, always-on assistance for customers or employees. They are often helpful on social media, in messaging, and on phone calls.

When Not to Use: Chatbots are less helpful for addressing customer grievances, as every individual is unique and the problem may be complex, spanning a longer period than any one business event or transaction.

Real-time Emotion Analytics (EA)

Definition: Emotion analytics collects data and analyzes how a person communicates verbally and nonverbally to understand a person’s mood or attitude in the context of an interaction. EA provides insights into how a customer perceives a product or service.

When to Use: EA can help you improve the usability, engagement, and satisfaction of your users, as well as identify and address any pain points or frustrations.

When Not to Use: Like other forms of technology, emotional AI can display biases and inaccuracies. Consumers have to consent to being analyzed by emotional AI, which may present some privacy concerns.

Virtual Companions

Definition: A virtual companion is an embodied AI character that advances multiple forms of companionship. It includes not only the experience of togetherness with an AI character but can also augment the nurturing of companionship between people or animals.

When to Use: These interactive programs, accessible through the web or mobile devices, serve as companions or partners for therapy and mentorship. Early uses center on boyfriend or girlfriend relationships, fulfilling some of the functions usually associated with them, and on elderly care. Benefits around mentorship and collaboration in business are emerging.

When Not to Use: Be careful, as they can cause harm, such as hurting users emotionally or giving dangerous advice. Their use can sometimes perpetuate biases and problematic dynamics.

Expert Systems

Definition: Expert systems leverage AI to simulate the judgment and behavior of a human or an organization with expertise or experience in a particular field.

When to Use: Expert systems can be used standalone or to assist non-experts. They are helpful when human expertise is locally scarce, expensive, error-prone, or too slow.

When Not to Use: Expert systems do not leverage common sense and often lack the creative or sensitive responses that humans can deliver. Often, expert systems lack explainability.
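One classic way to build an expert system is a rule base plus forward chaining, where captured expertise is applied repeatedly until no new conclusions appear. The sketch below assumes that approach; the car-troubleshooting rules and the `infer` function are hypothetical examples, not a description of any specific product.

```python
# Each rule: (set of facts that must all be known, conclusion to add).
# These troubleshooting rules are invented for illustration.
RULES = [
    ({"engine_cranks", "no_start"}, "fuel_or_spark_issue"),
    ({"fuel_or_spark_issue", "fuel_gauge_empty"}, "refuel"),
    ({"no_crank", "lights_dim"}, "charge_battery"),
]


def infer(facts, rules):
    """Forward chaining: fire rules until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


derived = infer({"engine_cranks", "no_start", "fuel_gauge_empty"}, RULES)
print(sorted(derived))
```

The system can only conclude what its rules cover. A symptom outside the rule base produces nothing, which is the "no common sense" limitation in practice.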

Generative AI

Definition: Generative AI refers to models or algorithms that create brand-new output, such as text, photos, videos, code, data, or 3D renderings, from the vast amounts of data they are trained on. The models 'generate' new content by referring to the data they have been trained on, making new predictions and output.

When to Use: Generative AI creates new and often original content, responses, designs, and synthetic data. It’s valuable in creative fields and novel problem-solving while generating new types of outputs.

When Not to Use: Generative AI can provide helpful outputs based on users' queries, but sometimes, the material generated can be offensive, inappropriate, or inaccurate. Human guidance can correct the result and put it into context.

Physical

Edge AI

Definition: Edge AI is all about putting intelligence as close as possible to a device or edge computing environment. Edge AI allows computations to be done close to where the data is collected rather than at a centralized cloud computing facility or offsite data center.

When to Use: When speedy, always-on decisions are necessary close to where data is sensed and collected.

When Not to Use: Edge AI devices may not all have the same level of encryption, authentication, and protection, which makes them more vulnerable to cyberattacks. Scalability is also a challenge.

Sensing AI

Definition: Sensing AI is AI awareness driven by one or more human-replicated sensing capabilities, such as voice, vision, touch, taste, or smell. Sensing AI provides a presence in one or more physical contexts and presents data to the logical side of AI.

When to Use: Any time in-context computing will help. Any or all of these senses give immediate and vital feedback to computing systems and humans. They are often used in dangerous environments.

When Not to Use: When physical senses do not contribute to desired outcomes or where immediate feedback is unnecessary.

Autonomous Robotics (AR)

Definition: ARs are autonomous intelligent machines that can perform tasks and operate in environments independently, without human intervention.

When to Use: ARs are great at automating manual or repetitive activities in corporate or industrial settings, and they are also great at working in unpredictable or hazardous environments.

When Not to Use: Robots only do what they are programmed to do and can't do more than expected unless some kind of learning AI powers them.

Next-Gen Cloud Robotics

Definition: Cloud robotics is the use of cloud computing, cloud storage, and other internet technologies in the field of robotics. One of its main advantages is the ability to provide vast amounts of data to robotic devices without storing it in onboard memory.

When to Use: Cloud-based robot systems are capable of collaborative tasks. For example, a series of industrial robotic devices can process a custom order, manufacture it, and deliver it entirely on their own, without human operators.

When Not to Use: Tasks that involve real-time execution require onboard processing. Cloud-based applications can become slow or unavailable because of high latency or network hitches.

Robotic Personal Assistants

Definition: A robot personal assistant is an artificial intelligence that assists you with routine domestic chores and improves your quality of life.

When to Use: Today, these robots are used in specialized services such as cleaning.

When Not to Use: For tasks that require empathy or dynamic adaptability.

Management & Control

Artificial General Intelligence (AGI)

Definition: AGI represents generalized human cognitive abilities in software that can solve an unfamiliar task.

When to Use: If realized, an AGI could learn to accomplish any intellectual task humans or animals can perform. Alternatively, AGI has been defined as an autonomous system that surpasses human capabilities in most economically valuable tasks.

When Not to Use: It is not here yet.

Digital Twin

Definition: A digital twin is the digital representation of a physical object, person, or process, contextualized in a digital version of its environment. A digital twin links the logical side of AI and the physical side of AI in an artificial environment to visualize, simulate, and try actions without real consequences, ultimately promoting better decisions by humans or machines.

When to Use: Digital twin technology enables you to create higher-quality products, buildings, or even entire cities. By creating a simulation of a system or a physical object, designers can test different design scenarios, identify potential design flaws, and make improvements before construction begins.

When Not to Use: It is challenging to maintain a digital asset. Many digital twin efforts fail because the digital assets don't receive the same maintenance effort as the physical ones. The digital twin requires consistent upkeep, significant observation, and time to document all real-time changes.
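The idea of trying actions "without real consequences" can be sketched with a very small twin of a storage tank. Everything here is hypothetical: the `TankTwin` class, the flow rates, and the one-minute time step are assumptions chosen to keep the example short, and a real twin would be fed by live sensor data.

```python
from dataclasses import dataclass


@dataclass
class TankTwin:
    """Hypothetical digital twin of a storage tank (volumes in liters)."""
    level_l: float
    capacity_l: float

    def step(self, inflow_lpm, outflow_lpm, minutes=1.0):
        """Advance the simulated tank; return True if it has overflowed."""
        self.level_l += (inflow_lpm - outflow_lpm) * minutes
        self.level_l = max(0.0, self.level_l)
        return self.level_l > self.capacity_l


def minutes_until_overflow(twin, inflow_lpm, outflow_lpm, horizon_min):
    """Try a what-if scenario on a copy, leaving the twin's state untouched."""
    trial = TankTwin(twin.level_l, twin.capacity_l)
    for minute in range(1, horizon_min + 1):
        if trial.step(inflow_lpm, outflow_lpm):
            return minute
    return None


twin = TankTwin(level_l=800.0, capacity_l=1000.0)
risky = minutes_until_overflow(twin, inflow_lpm=50.0, outflow_lpm=20.0, horizon_min=60)
safe = minutes_until_overflow(twin, inflow_lpm=20.0, outflow_lpm=20.0, horizon_min=60)
print(risky, safe)
```

The scenario is evaluated on a copy, so the twin keeps mirroring the physical tank. If the twin's `level_l` is not kept in sync with the real asset, every what-if answer becomes unreliable, which is the maintenance burden described above.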

Smart Self-Generating/Adaptive Applications, Processes and Journeys

Definition: Self-adaptive software systems can adjust their behavior in response to their perception of the environment and of the system itself. Applications, processes, and journeys coordinate smart and not-so-smart resources and must constantly be tweaked to stay current with needs.

When to Use: When a system or process must support emerging conditions and desired outcomes.

When Not to Use: When the system or process exhibits long-term stability.

Goal-Driven & Constraint Behavior

Definition: When management goals change to reflect the latest thinking or emerging governance constraints, systems and processes seek these goals within governance boundaries.

When to Use: When volatility is a crucial consideration, or when there is a robust environment of emergence.

When Not to Use: When stability creates constancy.
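As a rough sketch of "seeking goals within governance boundaries", the code below moves a single setting toward a management goal while never leaving an allowed range or changing too fast. The discount scenario, the bounds, and the function names (`next_setting`, `seek`) are hypothetical illustrations, not a prescribed design.

```python
def next_setting(current, goal, lower, upper, max_step):
    """Move toward the goal by at most max_step, staying within [lower, upper]."""
    target = min(max(goal, lower), upper)  # governance bounds override the goal
    delta = target - current
    delta = max(-max_step, min(max_step, delta))
    return current + delta


def seek(current, goal, lower, upper, max_step, steps):
    """Apply next_setting repeatedly and record each value along the way."""
    history = [current]
    for _ in range(steps):
        current = next_setting(current, goal, lower, upper, max_step)
        history.append(current)
    return history


# Hypothetical case: management wants a 30% discount, governance caps it at 25%.
path = seek(current=10, goal=30, lower=0, upper=25, max_step=5, steps=5)

# Management later changes the goal to 12%; the system follows the new goal.
revised = seek(current=path[-1], goal=12, lower=0, upper=25, max_step=5, steps=3)
print(path, revised)
```

The system settles at the governance cap rather than the stated goal, then tracks the revised goal once it changes. When goals and constraints never move, this machinery adds little over a fixed setting.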

Cognitive Cybersecurity

Definition: Cognitive security is the intersection of cognitive science and artificial intelligence techniques, used to protect institutions against cyberattacks.

When to Use: When bad actors generate intelligent attacks.

When Not to Use: It is not optional today and is part of the intelligent infrastructure.

Net; Net: It is essential to understand all the flavors of AI so that solutions can leverage AI where it makes sense in the current and future business environments. The AI tributaries will combine into solutions that will be more business- or consumer-ready. Leading organizations will not wait long to take advantage of these tributaries and emerging combinations. Even organizations that follow rather than lead need to understand these tributaries to ask the right questions of vendors or internal developers. AI is shape-shifting, so let's stay on top of this emerging movement.

Additional Reading: Definition of AI